Our relationship with computers and phones has changed. We used to rely on software installed locally on our computers, and are now shifting towards a model based on services and companion apps, sometimes with free tiers and subscriptions.

Most services are provided by organisations, who collect and sometimes resell users’ information to third parties. This massive, indiscriminate, and corporate collection of personal data is called surveillance capitalism. While organisations can argue this data collection is necessary to provide their services, this comes with significant privacy implications.

Self-hosting and paid subscriptions are common strategies to escape surveillance capitalism. But what guarantees do they really offer? What alternatives exist for the general public who wants to escape surveillance capitalism, and at what cost?

Paid subscriptions are not enough

When services have a free and a paid tier, it can be tempting to think that the provider is going to sell your data on the free tier but they’re going to be mindful of it on the paid tier. Paying a subscription to your provider can sound like a good idea to keep your data safe, and it sometimes is. But it’s not necessarily the case.

As reported by The Verge, the mental health service provider BetterHelp shared customer’s email addresses, IP addresses, and health questionnaire information with third parties including Facebook and Snapchat, while promising it was private. Mental health is precisely the type of information that should remain private, making this move particularly appalling from BetterHelp.

Self-hosting, but as a service

One of the major enablers of surveillance capitalism is centralisation. In that sense, paid subscriptions don’t offer any guarantee that the provider will play fair game and keep your data private.

Self-hosting is rather efficient at preventing surveillance capitalism, mostly because the data doesn’t live in a central repository but on the self-hoster’s infrastructure. In that sense, it doesn’t directly enable surveillance capitalism. It’s important here to make a distinction between private and public information though. Posts on federated social media platforms are not centralised, but they are public and can be scraped to be exploited. The threat we’re discussing in this article is a provider growing so big it can collect and exploit data that is not public, at a large scale.

It should be noted that the vendor of the self-hosted solution could theoretically still gather information about the users by making its software send data to the mothership regardless of where it’s installed. To an extent, this can be acceptable as long as the user explicitly knows what data is sent, can opt-out, and that the minimum amount of anonymised data is sent for clearly defined purposes. This practice is known as telemetry. Open-source software allows any tech-savvy person to look up the code and check what is actually sent to the mothership. Proprietary software makes it much more difficult.

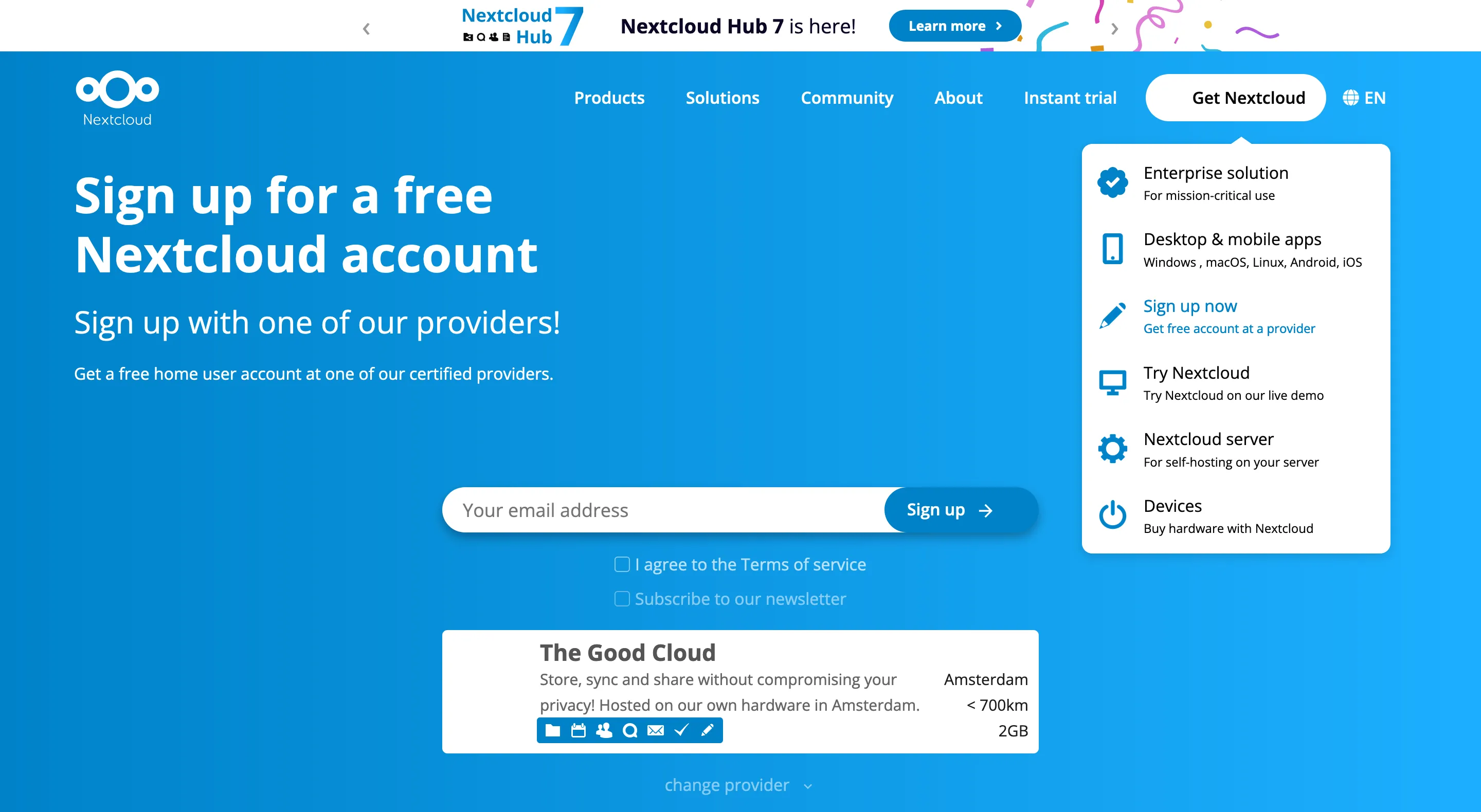

But as we discussed earlier on this blog, self-hosting doesn’t scale well because it requires time and knowledge. There’s a workaround: using software that can be self-hosted, but buying it as a service. A real life example would be the Google Drive ethical alternative Nextcloud: several providers like Ionos offer hosted Nextcloud instances. This makes solutions like these accessible (and safe!) to a broader public.

Using open-source licences means that the software can be audited, but it also allows anyone to take the code and offer it as service. This can lead to a race to the bottom in terms of cost and quality when the service providers are not playing fair game: they benefit from development work they didn’t invest resources in, all while not contributing financially or technically to the upstream project either.

If the customers of such providers encounter problems, support is often minimal: in very budget-tight environments, losing a customer can be more profitable than investigating a significant problem. Such predatory methods harm the ecosystems in which they’re deployed: customers get bad experiences, and the upstream project gets little to no benefit. Similar behaviours have been observed in the Matrix ecosystem where integrators deployed open source products without contributing anything back.

Ultimately, support contracts are an insurance for the service provider and for their customers. When the service provider pays for upstream’s support and reports an issue, the engineers who developed the product investigate the case, fix the problem, and make the fix permanent for everyone. This also allows the upstream to generate a bit of revenue, contributing to the project’s health, sustainability, and to the emergence of new exciting features.

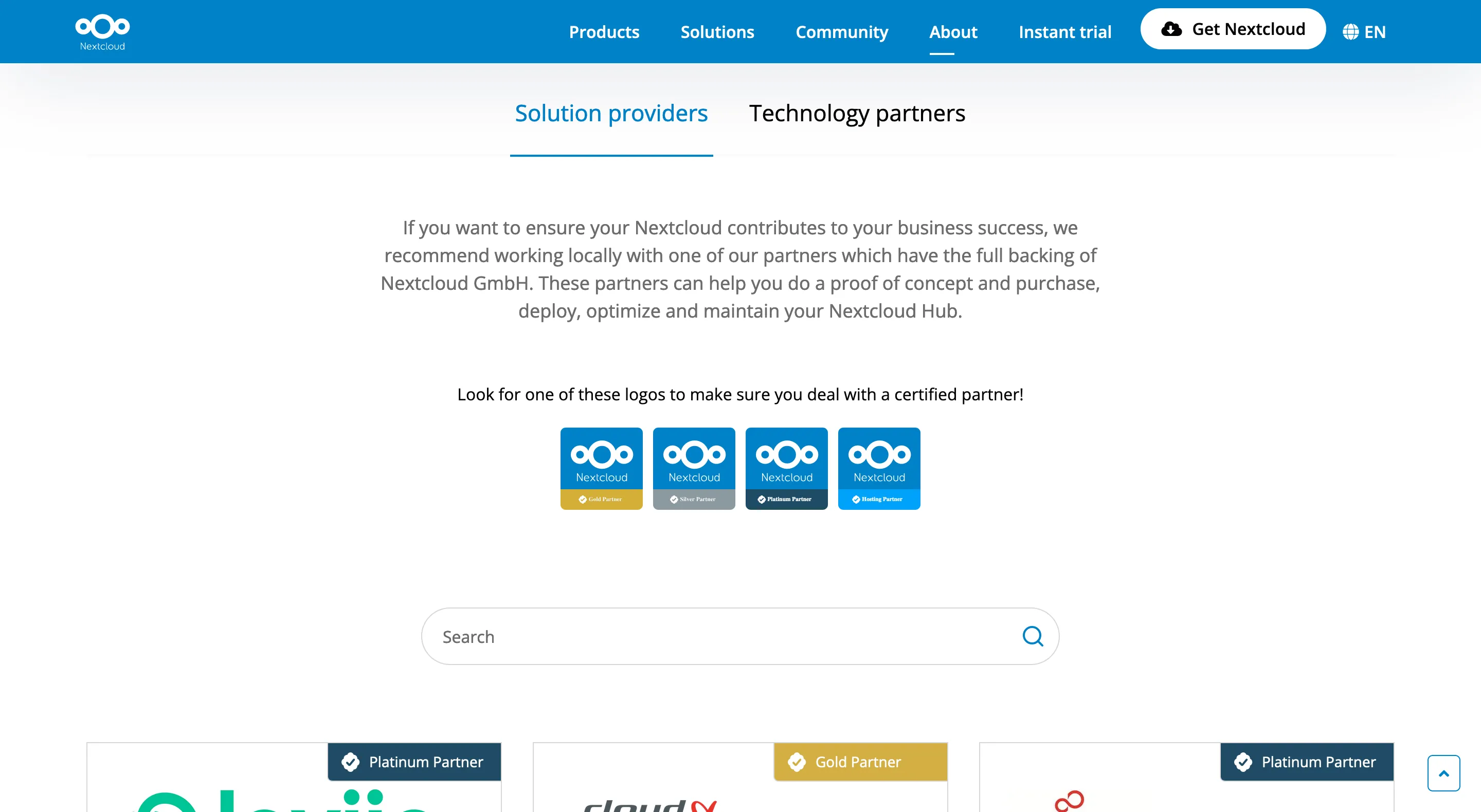

Nextcloud also allows users to sign up on third party providers directly from nextcloud.com. The sign-up feature makes it extremely easy for the user to choose a provider. Nextcloud’s Jos Poortvliet confirmed to me that it’s not a formal certification programme, but more of a group of companies Nextcloud trusts. Nextcloud doesn’t generate revenue from this programme, intentionally, since they’re not in the business of monetising private users. Certification programmes are usually very expensive to run and not necessarily profitable for vendors.

Not trusting anyone

When buying a hosted service from a provider, we enable a form of partial re-centralisation… which technically allows the provider to start selling the users’ data for profit.

There’s a third option: making sure the data can only be read by its intended recipients, turning the servers into rather dumb pipes. This is End-to-End Encryption (E2EE). It certainly sounds like a silver bullet! So why doesn’t every service provider implement it, to show their good faith? Because it has drawbacks.

When using E2EE, the files are encrypted. Nobody apart from their owner and people who have been explicitly authorised by the owner can read them. This means neither the server software nor the technical administrator of the server can read them either. This is often what users expect, but this has consequences!

The server becomes dumb

Since the server can’t read the data, there are some legitimate operations it cannot perform anymore. A typical example is “deep” search, which is functionality where the server spends computing time to read and index all the files so it’s easy for the user to query them. When files are encrypted, indexing and search can only happen on the client. Those operations are quite expensive, and the clients don’t necessarily have the computing power, connectivity or storage required to do so.

There are some new techniques such as homomorphic encryption that could eventually enable users to offload this computation to the server without the server learning anything about what it is actually doing but they are not yet ready for large-scale use.

Having a dumb server also severely limits its ability to send automated reports or alerts based on specific workflows. In a sense, the server can’t “work” for the user anymore and becomes nothing more than a backup service.

Data can become irrecoverable

With E2EE, encryption and decryption keys are stored on the device only, which is a significant risk. Let’s assume you host your important documents exclusively on an E2EE service and your keys only exist on your phone. If your phone is broken or lost, your decryption keys are lost with it.

There are three workarounds to avoid losing the keys entirely:

- Generating a “paper key” (also called “recovery key”, “seed passphrase”, or even “paper wallet” in the context of cryptocurrencies) directly on the client, and giving the user the 12 to 15 words to write down or print somewhere.

- Derive the encryption key from the user’s password. This is the approach Firefox Sync is taking for example.

- Storing the encryption keys on the server-side, in a vault encrypted by a key derived from a passphrase. The passphrase must of course be long enough to make it difficult to break by the server administrator.

The major inconvenient of these workarounds is that they require the user to either print/write a generated key and store them somewhere safe where they can recover it later, or remember a passphrase to access their en/decryption keys. If the user loses the generated key or can’t remember the passphrase, their data is lost and irrecoverable. The service provider cannot do anything about it because they can’t access the data. In other words: there’s no “forgot my password” link anymore.

The reputational risk of not being able to help users who forgot their password is often unacceptable for service providers. Even Apple who positions itself as a company respectful of their users’ privacy doesn’t turn on E2EE by default, and understandably takes a lot of precautions before allowing users to turn on actual E2EE on their iCloud account.

The “E” in E2EE doesn’t stand for “Everything”

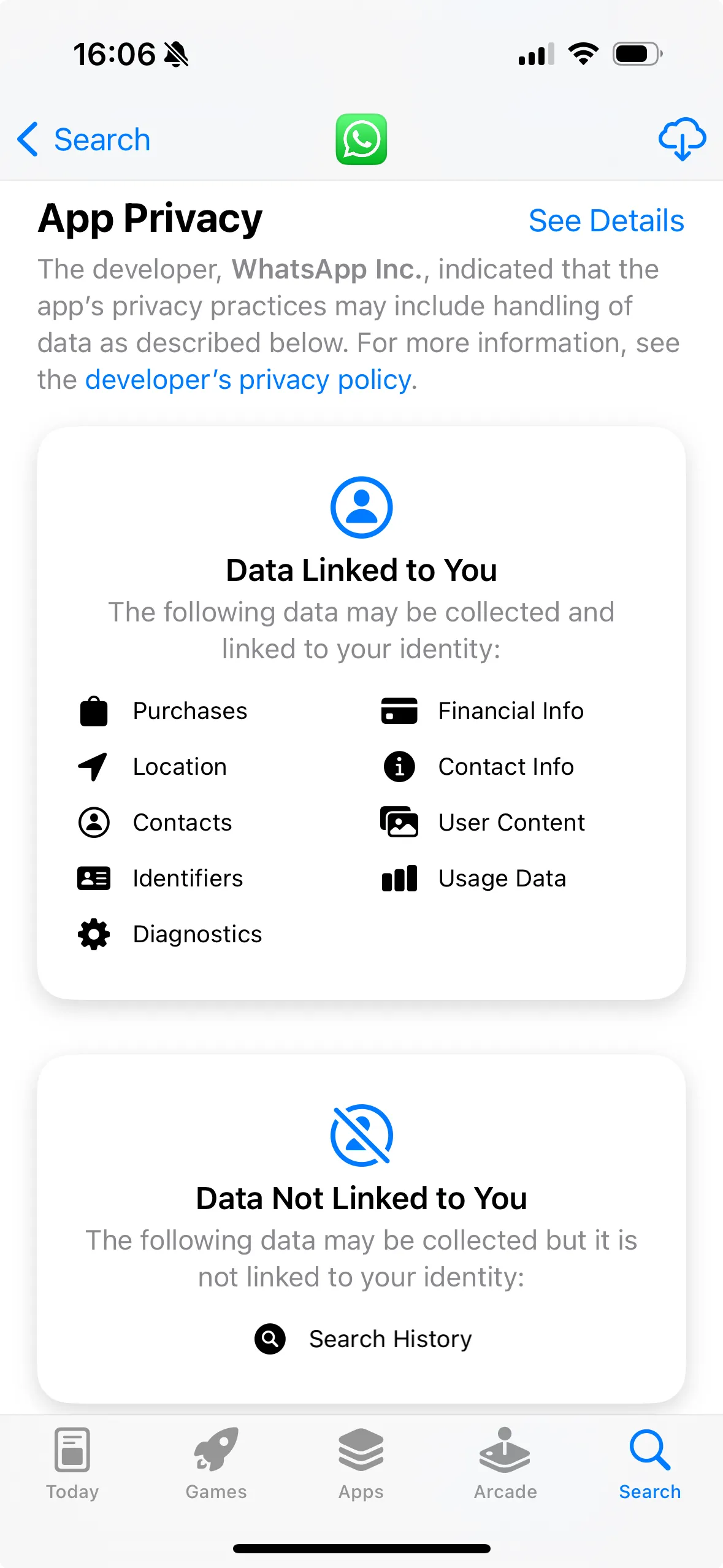

While E2EE is particularly good at preventing nosy folks from looking at files and messages themselves, it doesn’t mean everything is encrypted. In particular, metadata can be sent in clear text either because it’s necessary for the server to provide the service, or because the service providers can profit from it.

Typically, WhatsApp is an E2EE messenger, but the provider still has access to metadata. WhatsApp also has a moderation feature that allows users to decrypt an E2EE message they were sent, and send it to Meta for moderation purposes. While moderation is a valid use case which doesn’t break encryption itself, it shows that the client could in theory decrypt the message and send it to the service provider behind the user’s back.

This highlights that E2EE alone is also not enough: even if users don’t need to trust the service provider they need to be able to trust both the protocol and the client they rely on. This means the client necessarily needs to be open source and audited regularly by an independent third party.

Beyond tech

As we have seen, there are several strategies to help the general public trying to escape surveillance capitalism. Self-hosting is efficient but doesn’t scale well, paid instances of self-hostable software work generally well but are not a silver bullet, and E2EE is very useful to protect privacy but don’t provide a full guarantee either.

Ultimately E2EE is a very libertarian approach to a societal issue, taking a “myself against the world” stance. It can be a valid stance, especially for minorities and in hostile contexts. But surveillance capitalism is not a technological problem. It is enabled by technology, but at the very core it is a societal problem.

As Molly White said, “there are never purely technological solutions to societal problems”. To fight surveillance capitalism, we need E2EE, regulation, justice, and education.

We need E2EE to prevent the collection from happening in the first place. We need proper regulation to define what is acceptable or not, which will ultimately define what is a viable business model and what is not. This means that the fines must make it prohibitively unprofitable to sell users’ data. We need justice and executive bodies to actually enforce the regulation. And we need education for the general public to understand the risks of surveillance capitalism.

All my gratitude to Denis Kasak (dkasak), Denise Almeida, Benjamin Bouvier (bnjbvr), and Jonas Platte (jplatte) for their valuable time, comments and suggestions on this article .

Comments

Comments

Comments

Comments